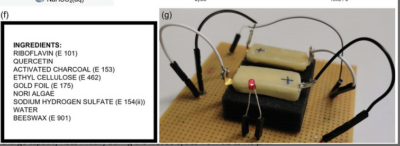

A team of researchers at the Istituto Italiano di Tecnologia (IIT, Italian Institute of Technology) has created a fully edible, rechargeable battery made from materials that are normally consumed as part of our daily diet. The proof-of-concept battery cell is described in a paper recently published in the journal Advanced Materials. Possible applications include health diagnostics, food-quality monitoring and edible soft robotics.

Spotted via "Completely Edible Rechargeable Battery Created".

Of course, we won't actually feed the edible cells to the robot; we'll eat them ourselves as part of medical devices and, perhaps eventually, food-quality monitors. As I.K. Ilic, V. Galli, L. Lamanna, P. Cataldi, L. Pasquale, V.F. Annese, A. Athanassiou and M. Caironi explain in "An Edible Rechargeable Battery", Advanced Materials (2023):

> In this paper, we present the first edible rechargeable battery based only on organic redox-active materials. All the materials used in the formation of the battery are common food ingredients and additives that humans can eat without harm in large amounts, >100 mg per day. First, we prepared a composite of redox-active food additives and ingredients with activated carbon, a conductive food additive. This allows electrons to flow to and from the redox-active centers. Upon testing the electrochemical performance of these composites, we established two alternatives for cathode and anode materials. We chose the highest and the lowest redox reduction potential materials, namely riboflavin (vitamin B2) and quercetin, and assembled the battery using edible current collectors and packaging. Such a battery can be used to power edible electronic devices operating outside the human body, as well as those operating inside, once the packaging is adjusted for the application. While rechargeable properties of the battery might not be useful for short-lived applications inside the human body, edible devices operating outside the human body can be recharged, prolonging their lifetime. This long-sought achievement not only enables the development of edible electronics, but can also pave the way for the replacement of commercial batteries in ingestible devices, reducing their risk upon ingestion.
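Why pick the two extremes? The standard textbook relation helps here. A cell's nominal voltage is the difference between the reduction potentials of its cathode and anode materials:

$$
E_{\text{cell}} = E_{\text{red}}^{\text{cathode}} - E_{\text{red}}^{\text{anode}}
$$

Pairing the candidate with the highest reduction potential (as the cathode) against the one with the lowest (as the anode) therefore gives the largest voltage the candidate set can offer. This is general electrochemistry, not a figure taken from the paper.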

Meanwhile,

Q: How do you classify the role of an edible-battery tester?

A: It’s a high-power job.

These things are DANGEROUS.