I was very honored to be asked to write the preface for Un droit de l’intelligence artificielle: entre règles sectorielles et régime général. Perspectives de droit comparé (Céline Castets-Renard, Jessica Eynard, eds.), which should appear shortly. An English edition is due to follow in a few months.

Since a Preface is short, I decided to compose it in French, relying on the able editors to correct any infelicities and the occasional failure to agree gender or the like. The result is not my first foreign-language publication, nor even the only one due this year, but it is the first where the foreign version is not a translation. Here it is en version originale:

L’intelligence artificielle sera bientôt, si elle ne l’est déjà, une des technologies les plus importantes et aussi une des plus dangereuses que nous n’ayons jamais rencontrées. Comme William Gibson nous avertit, « l’avenir est déjà ici, il n’est tout simplement pas encore uniformément réparti ».

L’enfant de l’informatique et des mégadonnées, l’apprentissage automatique, dit l’intelligence artificielle (IA), a infiltré plusieurs domaines, y compris des décisions gouvernementales (soit les bénéfices sociaux ou l’administration de la justice), les services de santé, le champ de bataille, et des tentatives de manipulation des élections et de l’espace public, ainsi que les marchés financiers.

Actuellement, les systèmes d’IA ont tendance à être opaques. Jusqu’à ce que nous ayons appris à en construire de meilleurs, il restera difficile d’identifier les informations spécifiques les plus susceptibles de déterminer une conclusion donnée. De même, sans schéma de provenance des données, il restera difficile de détecter les caractéristiques subtiles qui peuvent entraîner diverses formes de discriminations involontaires, mais néanmoins indésirables, et même illégales.

L’IA soulève de nombreuses questions sociales, tel que l’avenir du travail. Tous, des ouvriers d’usine aux professionnels tels que les médecins et les avocats, pourraient voir leurs emplois transformés. Ce que nous ignorons encore est de savoir si l’IA deviendra notre conseiller, notre collègue, notre patron (et notre surveillant qui voit tout), ou si peut-être certains d’entre nous ne travaillerons plus du tout parce que les IA auront pris nos emplois, étant à la fois plus précises et plus perspicaces.

Nous juristes avons tendance à considérer que le rôle de la loi et de la réglementation est au cœur de l’enquête sur l’IA. Je reconnais que les choix sociaux concernant la configuration et le déploiement de l’IA ne devraient pas être laissés au marché sans contrôle légitime. Mais ce qui devrait passer en premier, ce sont les questions éthiques liées à l’IA. Les principes éthiques de l’introspection et de l’engagement sont essentiels pour tous ceux qui construisent, entretiennent, réglementent ou utilisent l’IA et, encore plus certainement, lorsque nous considérons les intérêts de ceux qui font l’objet des actions prises par l’IA. Mais cela doit être fait de manière soignée. Actuellement, la prolifération des standards éthiques aux États-Unis, par exemple, permet aux moins scrupuleux de chercher le standard qui leur permettra de revendiquer la vertu sans la pratiquer.

Même si l’on croit qu’il n’y a aucune chance que la technologie actuelle produise une IA consciente, il est concevable que, tôt ou tard, une IA puisse si bien imiter une personne que nous ne pourrions pas discerner le silicium sous le sourire. Cela finira plus probablement dans la fraude que dans la sensibilité. Bien sûr, il pourrait devenir commode d’adopter une fiction juridique dans laquelle nous attribuons certains aspects de la personnalité à l’entité computationnelle artificielle, tout comme nous le faisons pour certains aspects d’entités économiques artificielles – les entreprises. Dans tous les cas, les questions essentielles seront ce que nous voulons que nos machines fassent, et ne fassent pas, des questions qui devraient éclairer le chemin vers l’établissement des règles qui encourageront des résultats favorables.

Les problèmes éthiques et juridiques créés par l’IA sont interdisciplinaires, mais pour compliquer encore les choses, ils sont également transnationaux. Premièrement, n’étant que des données et des logiciels, à la fois les algorithmes et les méthodes de formation pour générer de nouveaux algorithmes, peuvent être partagés dans le monde entier en open source, dans la littérature académique, ou vendus au-delà des frontières. D’un autre côté, certains pays considèrent les informations sur leurs citoyens, par exemple les données nationales sur la santé, comme une ressource stratégique faisant partie de la politique économique nationale… mais les données et le code sont difficiles à enfermer.

Deuxièmement, la réglementation de l’IA est dans une période de débat, de développement rapide, et de concurrence. L’Union européenne, les ÉtatsUnis, la Chine et de nombreux autres pays sont confrontés au double défi de contrôler l’IA tout en l’encourageant – par peur d’être laissé derrière dans ce qu’ils décrivent comme une compétition commerciale et militaire. Dans le cas de l’UE, le RGPD crée chez certains un appétit bien compréhensible pour une seconde occasion de la création d’une norme transnationale, c’est-à-dire un système potentiellement extraterritorial, même viral.

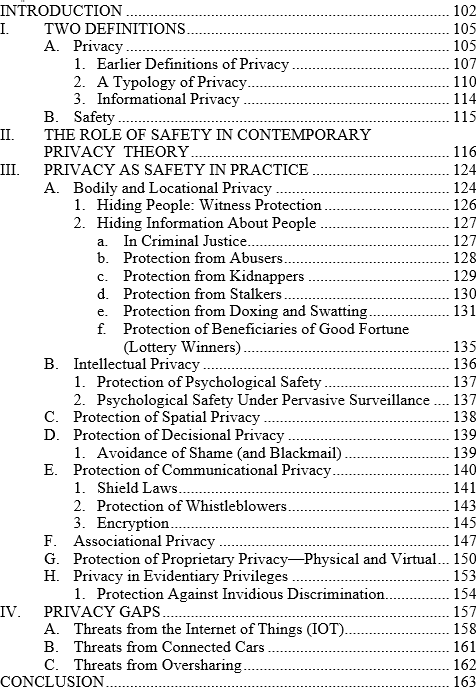

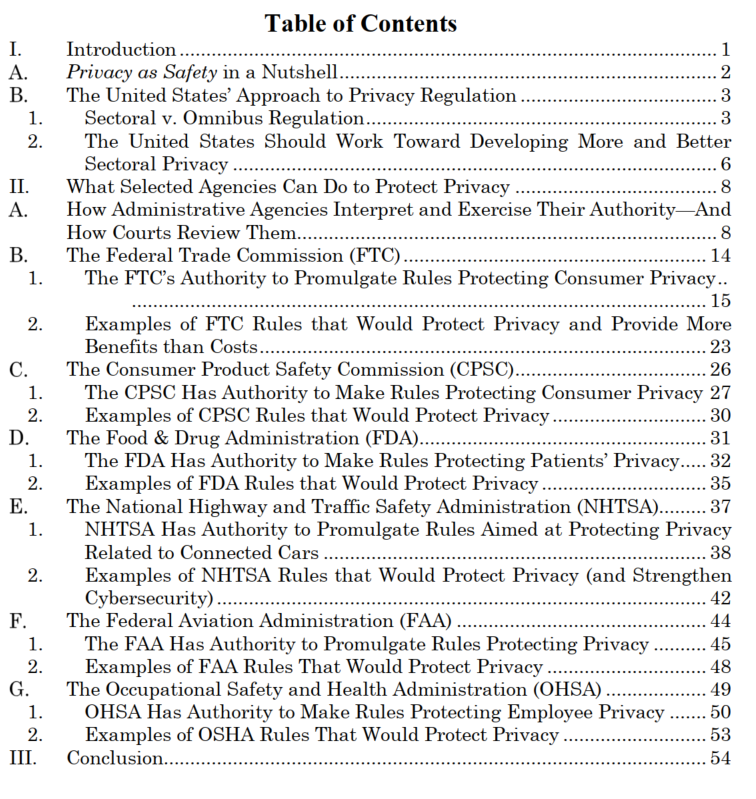

L’IA doit-elle être réglementée en tant que technologie, de haut en bas ou de manière sectorielle par des experts versés dans les différents domaines où l’IA sera déployée ? Je prédis que l’IA deviendra trop importante, trop dominante, pour nous permettre d’avoir un seul organisme de réglementation, car cet organisme contrôlerait non seulement la majeure partie de l’économie, mais une grande partie du gouvernement, ainsi que de nombreux aspects de la vie privée. Mais cela ne signifie pas que des efforts réglementaires plus ciblés ne puissent ou ne doivent pas être guidés par des principes généraux et, en effet, nous pourrions avoir besoin à la fois des principes généraux et des règles ciblées pour maximiser les avantages de l’IA tout en minimisant ses effets secondaires.

Quelle que soit la nature de la réponse de la société (ou devrais-je dire des sociétés ?) aux bénédictions et aux malédictions mitigées de l’IA, il est clair que nous ne sommes qu’au début d’une longue évolution. Je suis convaincu que nous avons beaucoup à apprendre les uns des autres, tant au niveau transnational qu’à travers les disciplines académiques et techniques. Les savants et experts contributeurs à cet ouvrage se sont lancés dans ce projet essentiel d’enseignement et d’apprentissage, et nous devons tous leur en être reconnaissants.

Coral Gables, Floride, États-Unis

Avril 2022

Amusingly, when I agreed to write this, I was not aware that the awesome editors were planning an English edition. I was thus a little surprised when they offered to translate the French into English for me, but I said I would do it myself.
